The Automation of Curiosity

Every other week I come across yet another article purporting to offer new ways to garner “insights” about consumers, markets, employees, or any other segment companies care about.

It is as if these insights are an enigma that all companies are on a quest to understand. What if I told you there is no magic recipe? All it takes is a simple principle that is often overlooked: being curious.

Before I explain further, let me share some background about myself that is relevant to the bigger story here. I have been in the data analysis space for over fifteen years, long before it became a sexy buzzword. I have worked in the corporate sector, with governments and NGOs, and taught research at the university level.

Throughout my career I have had some big wins. I uncovered insights that enabled companies to solve consumer-related challenges they weren’t even aware of, helped organizations figure out how to scale initiatives successfully through data-driven decision making, and even helped prevent a major lawsuit against a large corporation, with everything in between.

Those wins weren’t due to some special technical skill set. I knew people who were a lot smarter than me, better trained, and more experienced. I had one advantage that gave me the upper hand: an innate curiosity and an addiction to the journey of exploration.

What that meant for the datasets I dealt with, consumer-related or otherwise, is that I left no stone unturned. I manually conducted a surgical level of analysis.

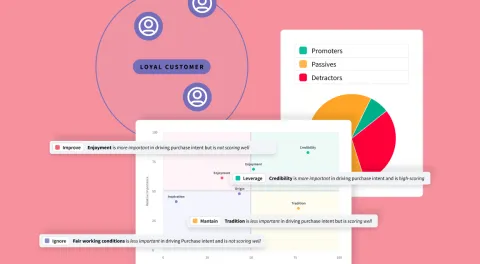

I segmented the data in every way possible.

I then segmented the segments.

I controlled for every variable available, then looked at how my outcome variables were influenced, through regression models, correlations, or significance testing.

I reduced the analysis to the most meaningful unit.

If it meant I needed to run 100 regression models controlling for all variables to see if geographic location in the United States was a predictor of consumer behavior, that’s what was done.

Why 100 models and not 50?

If there were two categories for gender, male and female, and 50 U.S. states, then knowing for sure whether gender had an influence on consumer behavior in each state meant one model for every gender-and-state combination: 2 × 50. So 100 models it was.

That was only the beginning.

Now imagine you also had variables for race, job type, or others. Every added variable multiplies the count, and the number of combinations quickly grows into the thousands.
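For anyone who wants to see the arithmetic above, here is a minimal sketch in Python. The variable names and the category counts for race and job type (5 and 10) are made up purely for illustration; they are not taken from any real dataset or from how any particular platform works.

```python
from itertools import product
from math import prod

# Hypothetical segmenting variables (illustrative placeholders only).
segment_levels = {
    "gender": ["male", "female"],
    "state": [f"state_{i:02d}" for i in range(1, 51)],
}


def count_segments(levels):
    """Number of distinct segments, i.e. one model per combination."""
    return prod(len(values) for values in levels.values())


def iter_segments(levels):
    """Yield each segment as a dict, e.g. {'gender': 'male', 'state': 'state_01'}."""
    names = list(levels)
    for combo in product(*(levels[name] for name in names)):
        yield dict(zip(names, combo))


base_count = count_segments(segment_levels)  # 2 * 50

# Adding two more assumed variables multiplies the count.
extended_levels = dict(segment_levels)
extended_levels["race"] = [f"race_{i}" for i in range(1, 6)]      # assume 5 categories
extended_levels["job_type"] = [f"job_{i}" for i in range(1, 11)]  # assume 10 categories
extended_count = count_segments(extended_levels)  # 100 * 5 * 10

print(base_count, extended_count)
```

With these assumed counts, two variables give 100 segments and four give 5,000, which is roughly why the manual approach stops scaling so quickly.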

Certainly this was not something I could do exhaustively by hand, even with the help of the team of data scientists I managed.

To the extent time allowed, before a presentation to a board of directors or a client representative, I conducted as many analyses as possible because I was genuinely curious to find out how the results changed or didn’t.

Impractical?

It was.

Guess what happened in the process? Insights were uncovered that companies were desperate to have and needed to grow their businesses. Major strategy decisions were made, findings were presented that changed the direction of lawsuits against Fortune 500 companies, and people started to take notice.

A gift and a curse.

That gift of curiosity also became a curse as the demands on my time increased. The workload felt unbearable at times. This wasn’t sustainable. There had to be a way to automate some of my work. There were many sleepless nights before the proverbial light bulb went off in my own head.

Fast forward to a few years ago, when I left the corporate world behind. With the help of a co-founder and, ironically, more sleepless nights, we set out to build a platform for the old me.

The bright idea.

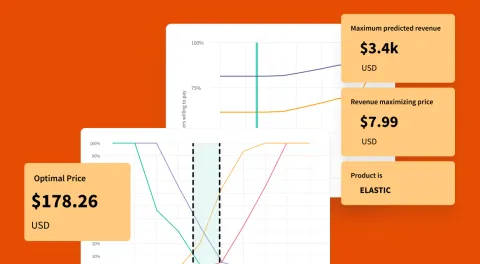

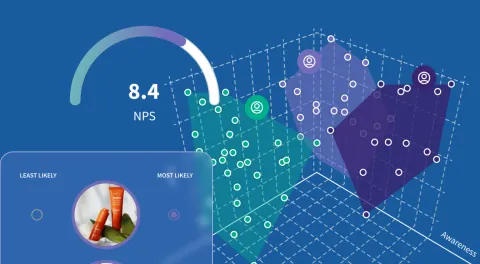

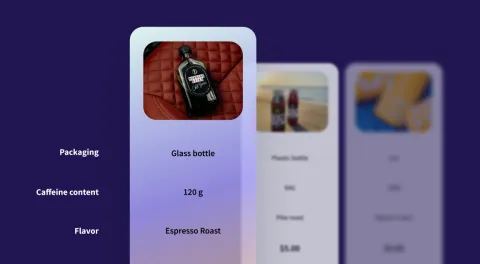

The vision: use the power of available technologies, machine learning, and artificial intelligence to instantly uncover deep insights about human psychology – how people feel, act, and think.

Armed with that, we can make close to real-time strategic decisions informed by real statistical data and analysis of written feedback at scale.

Bringing the most important and relevant insights to life for our clients lets not only us, but them, get more sleep too.

For the longest time I thought we were in the business of automating analysis, until it hit me: we are automating curiosity.

"Only with curiosity can we move the needle towards meaningful insights."

Time is the only commodity we never get back in life. Giving time back to our users, so they can focus on growing their businesses with data-based decisions, is our metric of success.

No need to bookmark this post; the SightX platform will handle this for you!