If you work in the market research and insights space, you've likely come across the terms "significantly different", "confidence level", or "margin of error" used to describe and compare data sets.

And for good reason!

Significance testing is one of the tools research and insights pros rely on most to understand whether the differences they see between segments (groups of data) are statistically significant or just noise in the data.

Today, we'll be exploring the ins and outs of significance testing and giving you some ways to more easily use it in your own research!

What is Significance Testing in Market Research?

In market research, statistical significance is used when comparing sets of data. It helps you judge whether the differences you're seeing are truly "significant" or could simply be the result of random sampling error.

Why Significance Testing Matters in Market Research

There will always be variations in your data. Even if you ran identical studies hours apart, chances are your results would not look exactly the same.

Significance testing allows you to understand if those variations are notable or if they are merely chance.

For example, let’s say you’re running a concept test for your upcoming ad campaign. After exposing respondents to your ads, you ask them how likely they are to purchase your product. But once the data rolls in, these are your results: 64% of respondents were likely to buy your product after viewing the first ad, while 72% said the same thing after seeing the second ad.

So, you ask yourself: is that 8-percentage-point difference meaningful?

This is precisely where significance testing comes into play.
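To put numbers on the ad example, here is a minimal Python sketch of a two-proportion z-test, one common way to check whether two percentages differ. The example doesn't say how many people saw each ad, so the sample sizes below (400 and 100 respondents per ad) are hypothetical, and this isn't necessarily the test the SightX platform runs behind the scenes.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for the difference between two proportions.

    hits_*: respondents who said they were likely to buy; n_*: respondents shown that ad.
    Returns (z, p_value).
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    # Under "no real difference", both ads share one pooled purchase-intent rate.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("both groups are all 0% or all 100%; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided tail area of the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical sample sizes: the same 64% vs. 72% split at two different n.
for n in (400, 100):
    z, p = two_proportion_z_test(round(0.64 * n), n, round(0.72 * n), n)
    print(f"{n} respondents per ad: z = {z:.2f}, p = {p:.3f}")
```

With 400 respondents per ad, the p-value comes out below 0.02; with 100 per ad, the same split gives a p-value above 0.2. The size of the gap alone doesn't settle the question: sample size matters too.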

What Significance Testing in Market Research Does Not Do

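The term "statistically significant" is often misunderstood, so it gets applied to things that can't actually be statistically significant, like an entire survey. Significance always describes a specific comparison, such as purchase intent after one ad versus another.

If a comparison isn't statistically significant, that doesn't necessarily mean what you tested is bad for your product or brand. It only means the data can't rule out chance as the explanation for the difference you see.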

A Few Significant Terms to Know

P-Values

To fully understand any of the significance tests you run, you'll need to be able to read their key output: the p-value.

A p-value is a number between 0 and 1. It is the probability of seeing a difference at least as large as the one in your data if there were actually no real difference between the groups. The closer it is to 0, the stronger the evidence that the difference is real.

So, a p-value of 0.01 means that if there were no real difference, a gap this large would show up only about 1% of the time by chance alone. That's strong evidence the difference is real, and it easily clears the 0.05 cutoff used at the standard 95% confidence level.
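One way to see what a p-value measures is to simulate a world where there is no real difference and count how often chance alone produces a gap as large as the observed one. The minimal Python sketch below does that for the 64% vs. 72% ad example, assuming a hypothetical 400 respondents per ad; the function name, seed, and simulation count are illustrative choices.

```python
import random


def simulated_p_value(hits_a, n_a, hits_b, n_b, n_sims=5000, seed=42):
    """Estimate a two-sided p-value by simulating 'no real difference' many times."""
    rng = random.Random(seed)
    # Under the null hypothesis, both groups share one pooled rate.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    observed_gap = abs(hits_b / n_b - hits_a / n_a)
    as_extreme = 0
    for _ in range(n_sims):
        sim_a = sum(rng.random() < pooled for _ in range(n_a))
        sim_b = sum(rng.random() < pooled for _ in range(n_b))
        # Small tolerance so float rounding doesn't drop gaps exactly equal to the observed one.
        if abs(sim_b / n_b - sim_a / n_a) >= observed_gap - 1e-12:
            as_extreme += 1
    return as_extreme / n_sims


# 64% vs. 72% likely-to-buy, hypothetically 400 respondents per ad.
print(simulated_p_value(256, 400, 288, 400))
```

The printed share is the (estimated) p-value: how often a gap of at least 8 points appears when both ads truly perform the same.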

Confidence Levels

We all know that nothing in life is 100% certain. But, because our insights crucially guide branding, product, and marketing strategies, we need to be as close to 100% certain as one can be.

When calculating statistical significance, you are doing so at a specific confidence level.

The confidence level sets how strong the evidence must be before you call a difference significant. At a 95% confidence level, a difference counts as significant only when its p-value is below 0.05 (100% minus 95%). Put another way, when there's no real difference, you accept roughly a 5% chance of flagging one anyway.

We always suggest using the industry standard 95% as the confidence level default. However, you can change your confidence level when needed in the SightX platform.
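The link between a confidence level and a p-value cutoff is a single subtraction. This minimal Python sketch checks a couple of p-values against 95% and 90% confidence levels; the function names are illustrative and not part of any SightX API.

```python
def significance_cutoff(confidence_level):
    """Convert a confidence level (e.g. 0.95) into its p-value cutoff, alpha = 1 - confidence."""
    if not 0 < confidence_level < 1:
        raise ValueError("confidence_level must be between 0 and 1, e.g. 0.95")
    # Rounding strips float noise such as 1 - 0.95 == 0.050000000000000044.
    return round(1 - confidence_level, 10)


def is_significant(p_value, confidence_level=0.95):
    """A result is significant when its p-value falls below the cutoff."""
    return p_value < significance_cutoff(confidence_level)


for p in (0.01, 0.06):
    for level in (0.95, 0.90):
        verdict = "significant" if is_significant(p, level) else "not significant"
        print(f"p = {p:.2f} at {level:.0%} confidence: {verdict}")
```

A p-value of 0.06 is significant at 90% confidence but not at 95%, which is why the confidence level you choose matters.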

Types of Significance Testing

There are many types of significance tests you can run depending on the outputs you want, the data you have, and what exactly you're comparing. All this to say, things can quickly get confusing, even for those familiar with the topic.

So, to keep things simple, we'll discuss the main types of significance testing we use on the SightX platform, helping you better understand what happens on the backend.

Researchers use Analysis of Variance (ANOVA) when investigating whether there is a significant difference between the averages of three or more groups of data. You might use ANOVA to understand whether average spend on your products differs across age groups or regions. But it's important to note that this test only tells you whether a significant difference exists somewhere among the groups, not which groups differ.
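Here is a minimal Python sketch of the idea behind one-way ANOVA, using made-up monthly spend figures for three regions. It computes the standard F statistic (variation between group averages relative to variation within groups), but because Python's standard library has no F distribution, it swaps in a permutation test for the p-value instead of the usual F-distribution lookup. Treat it as an illustration, not the platform's implementation.

```python
import math
import random
from statistics import fmean


def f_statistic(groups):
    """One-way ANOVA F: variance between group means over variance within groups."""
    values = [x for g in groups for x in g]
    k, n = len(groups), len(values)
    grand_mean = fmean(values)
    means = [fmean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    if ss_within == 0:
        # Every group is constant: any gap between means is perfectly clear.
        return math.inf if ss_between > 0 else 0.0
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def permutation_p_value(groups, n_perm=2000, seed=0):
    """Share of random relabelings whose F is at least as large as the observed F."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    pooled = [x for g in groups for x in g]
    sizes = [len(g) for g in groups]
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # break any real link between value and group
        regrouped, start = [], 0
        for size in sizes:
            regrouped.append(pooled[start:start + size])
            start += size
        if f_statistic(regrouped) >= observed - 1e-12:
            hits += 1
    # +1 in numerator and denominator counts the observed labeling itself.
    return (hits + 1) / (n_perm + 1)


# Hypothetical monthly spend ($) by region.
north = [42, 38, 51, 45, 40, 47]
south = [55, 60, 52, 58, 61, 57]
west = [44, 49, 41, 46, 50, 43]
print(f"F = {f_statistic([north, south, west]):.2f}")
print(f"p = {permutation_p_value([north, south, west]):.4f}")
```

A small p-value here says at least one region's average spend differs from the others; it doesn't say which one.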

On the other hand, Chi-Square is used to examine whether two categorical variables are related. You might use a Chi-Square test to learn whether income level and preference for a specific brand are related. But, similar to ANOVA, Chi-Square tests only tell you IF there is a significant relationship, not where it lies.
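A minimal Python sketch of a Chi-Square test of independence, applied to hypothetical counts of income level versus brand preference. The p-value uses the closed-form expression for the chi-square tail that exists for whole-number degrees of freedom, so nothing beyond the standard library is needed; the helper names are illustrative.

```python
import math


def chi_square_test(table):
    """Pearson chi-square test of independence for a table of counts (rows x columns).

    Assumes every row and column has at least one count.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count we'd expect in this cell if the two variables were unrelated.
            expected = row_totals[i] * col_totals[j] / grand
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df, chi2_sf(stat, df)


def chi2_sf(x, df):
    """P(X >= x) for a chi-square variable with integer df, via its closed-form series."""
    if x <= 0:
        return 1.0
    z = x / 2.0
    # Terms z^(j+h) * e^(-z) / Gamma(j+h+1), computed in log space to avoid overflow.
    h = 0.0 if df % 2 == 0 else 0.5
    tail = sum(math.exp(-z + (j + h) * math.log(z) - math.lgamma(j + h + 1)) for j in range(df // 2))
    return tail if df % 2 == 0 else math.erfc(math.sqrt(z)) + tail


# Hypothetical counts: rows = income level, columns = prefers Brand A / Brand B.
table = [
    [45, 55],  # lower income
    [60, 40],  # middle income
    [75, 25],  # higher income
]
stat, df, p = chi_square_test(table)
print(f"chi-square = {stat:.2f}, df = {df}, p = {p:.5f}")
```

A small p-value says income level and brand preference appear to be related, but not which income groups drive the relationship.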

If your ANOVA or Chi-Square test indicates a significant difference, you then need to find out where it is in your data. A post-hoc test compares the groups pair by pair, so you can quickly see which sets of data differ significantly.
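There are many post-hoc procedures, and which one the platform uses isn't specified here. As one simple illustration, this Python sketch compares every pair of groups with a two-proportion z-test and applies a Bonferroni correction (multiplying each p-value by the number of comparisons), so running several tests doesn't inflate the odds of a false positive. The counts, the share of each income group preferring Brand A, are hypothetical.

```python
import math
from itertools import combinations


def two_sided_p(hits_a, n_a, hits_b, n_b):
    """Two-proportion z-test p-value with a pooled standard error."""
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (hits_b / n_b - hits_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def bonferroni_pairwise(groups, alpha=0.05):
    """Compare every pair of groups; return ((a, b), adjusted p, significant?) tuples."""
    pairs = list(combinations(groups, 2))
    results = []
    for a, b in pairs:
        raw_p = two_sided_p(*groups[a], *groups[b])
        adjusted = min(1.0, raw_p * len(pairs))  # Bonferroni: scale by number of comparisons
        results.append(((a, b), adjusted, adjusted < alpha))
    return results


# Hypothetical (preferred Brand A, respondents) per income group.
groups = {"lower": (45, 100), "middle": (60, 100), "higher": (75, 100)}
for (a, b), p, sig in bonferroni_pairwise(groups):
    print(f"{a} vs {b}: adjusted p = {p:.4f}{' *' if sig else ''}")
```

With these made-up numbers, only the lower-versus-higher income comparison stays significant after the correction, even though the other two pairs would pass a plain 0.05 cutoff on their own.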

If all of this sounds a bit overwhelming, you're not alone! And it's precisely why we automated the entire process.

Significance Testing Made Simple with SightX

While we love manual math as much as the next insights pro, we’ve significantly (😉) simplified the process.

With our automated significance testing, you can quickly evaluate whether the differences in your consumer data are significant or could have occurred by chance. In your analysis dashboard, open the toolbox and select the yellow Significance Testing icon, choose your confidence level, and click “apply”.

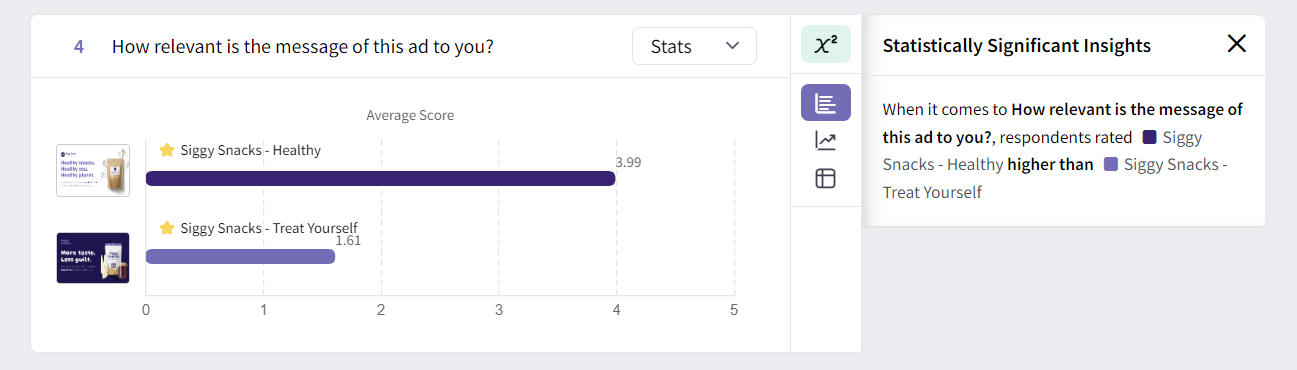

All of the visualizations will automatically update with the corresponding significance testing data. The options with statistically significant data will appear with a star, and the significant insights are displayed to the right.

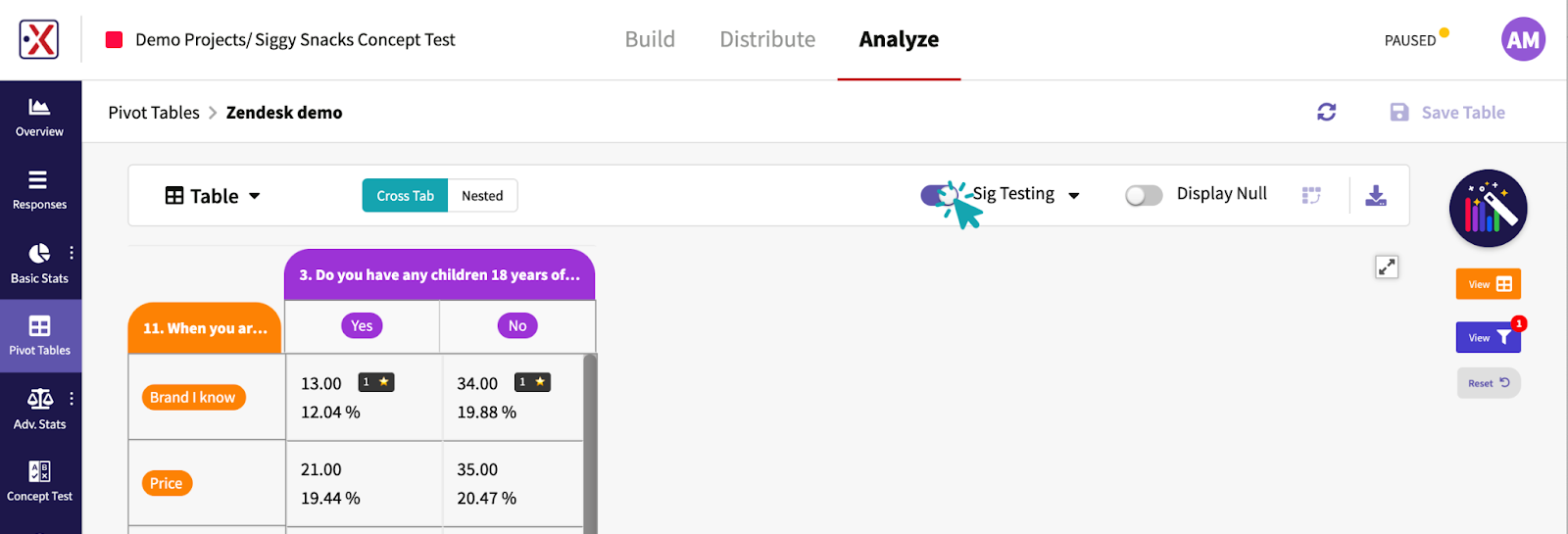

And, for your cross tabs and pivot tables, automated significance testing is just as simple. Once you’ve generated your table, simply toggle the “Sig Testing” option at the top of the page to get immediate insights on which of your data points are statistically significant.

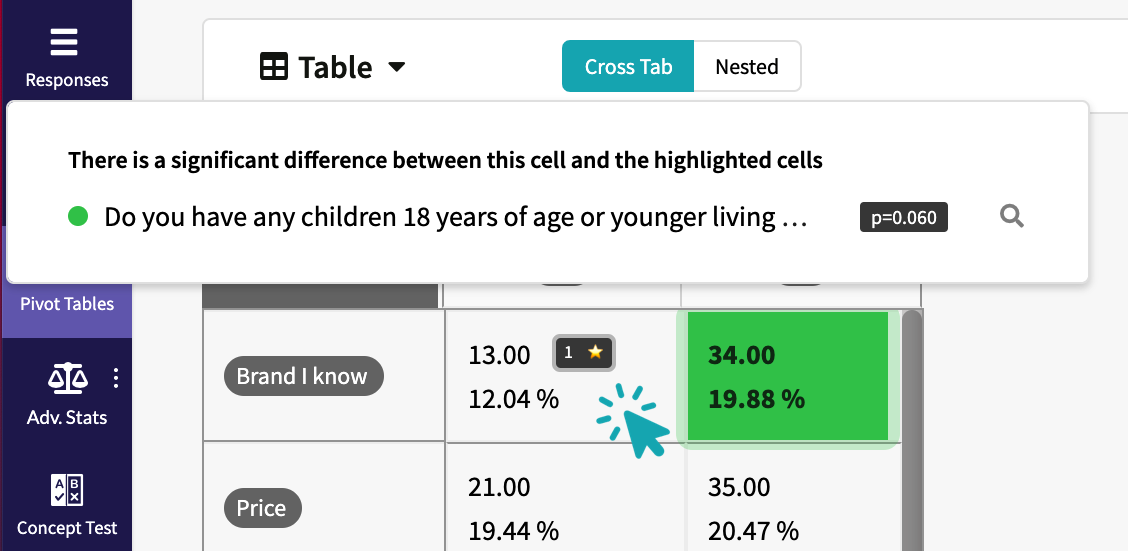

Plus, if you’re looking for more details, you can hover your cursor over the cell with a significance indicator (⭐) to see p-values and which cells are significantly different from the cell you're hovering over.

As you can see in the example above, our p-value is 0.060. This means that if there were no real difference between these cells, a gap at least this large would appear about 6% of the time by chance alone. That clears a 90% confidence level (p below 0.10) but not the 95% default (p below 0.05).

Getting Started with Significance Testing

If you’re ready to level up your insights, we’ve got the tools to make it happen!

The SightX platform is the next generation of market research tools: a single unified solution for consumer engagement, understanding, advanced analysis, and reporting.

Whether you are ready for a total DIY experience or prefer some support and guidance, we’ve got you covered. Start a free trial today!